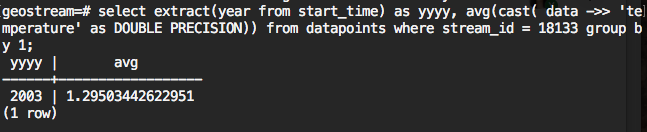

This SQL statement is computing average of the bins which created by grouping the extracted time component

"where" statement contains the filtering, it should include the stream_id (sensor_id), could include start time, end time, source .....

"group by" is to do the grouping, "by 1" meaning group by the first selection, i.e. extract(year from start_time) in this example. it can also by "by 1,2"....

"cast( data ->> 'temperature' as DOUBLE PRECISION)" is to find the 'temperature' in data and convert it to double format. Using the following SQL can make it more responsive ( just running once):

create or replace function cast_to_double(text) returns DOUBLE PRECISION as $$

begin

-- Note the double casting to avoid infinite recursion.

return cast($1::varchar as DOUBLE PRECISION);

exception

when invalid_text_representation then

return 0.0;

end;

$$ language plpgsql immutable;

create cast (text as DOUBLE PRECISION) with function cast_to_double(text); |

"avg" is to get the average, it usually used alone with "group by", you can have sum, count.....

by returning the average of the datapoints, short the time for streaming.

then we just need to convert each SQL result to a json.

This SQL statement pre-generate bins using "generate_series()" and tstzrange type; then it joins with datapoints table and groups by bins

with bin as (

select tstzrange(s, s+'1 year'::interval) as r

from generate_series('2002-01-01 00:00:00-05'::timestamp, '2017-12-31 23:59:59-05'::timestamp, '1 year') as s

)

select

bin.r,

avg(cast( data->> 'pH' as DOUBLE PRECISION))

from datapoints

right join bin on datapoints.start_time <@ bin.r

where datapoints.stream_id = 1584

group by 1

order by 1; |

This SQL statement pre-generate bins using "generate_series()" and tstzrange type; then it uses filter with avg function instead of join

with bin as (

select tstzrange(s, s+'1 year'::interval) as r

from generate_series('2002-01-01 00:00:00-05'::timestamp, '2017-12-31 23:59:59-05'::timestamp, '1 year') as s

)

select

bin.r,

avg(cast( data->> 'pH' as DOUBLE PRECISION)) filter(where datapoints.start_time <@ bin.r)

from datapoints, bin

where datapoints.stream_id = 1584

group by 1

order by 1;

|

Tested with GLGT production database. Used the stream_id 1584 which has 987,384 datapoints. Used "explain analyze"

| Method 1 | Method 2 | Method 3 | |

|---|---|---|---|

| Planning time | 0.269 ms | 0.259 ms | 0.145 ms |

| Execution time | 3069.059 ms | 6029.105 ms | 12008.801 ms |