...

Extractors use the RabbitMQ message bus to communicate with Clowder instances. Each extractor has its own queue, and the queue's bindings determine which types of Clowder event messages the extractor is notified about. The following non-exhaustive list shows events that exist in Clowder (the binding patterns begin with an asterisk because the exchange name, which forms the first part of each routing key, is not required to be 'clowder'):

| message type | trigger event | message payload | examples |
|---|---|---|---|
| `*.file.#` | when any file is uploaded | | `clowder.file.image.png`, `clowder.file.text.csv`, `clowder.file.application.json` |
| `*.file.image.#`, `*.file.text.#`, ... | when any file of the given MIME type is uploaded (this is just a more specific match) | | see above |
| `*.dataset.file.added` | when a file is added to a dataset | | `clowder.dataset.file.added` |
| `*.dataset.file.removed` | when a file is removed from a dataset | | `clowder.dataset.file.removed` |
| `*.metadata.added` | when metadata is added to a file or dataset | | `clowder.metadata.added` |
| `*.metadata.removed` | when metadata is removed from a file or dataset | | `clowder.metadata.removed` |
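The patterns in the first column follow RabbitMQ topic-exchange rules: words are separated by dots, `*` matches exactly one word, and `#` matches zero or more words. The sketch below re-implements that rule in plain Python so you can check which routing keys a binding would receive. It illustrates the AMQP topic semantics only; `topic_matches` is a hypothetical helper, not part of Clowder or pyClowder.

```python
from functools import lru_cache


def topic_matches(pattern: str, routing_key: str) -> bool:
    """Return True if routing_key matches an AMQP topic binding pattern.

    Words are separated by dots; '*' matches exactly one word and
    '#' matches zero or more words.
    """
    pat = pattern.split(".")
    key = routing_key.split(".")

    @lru_cache(maxsize=None)
    def match(i: int, j: int) -> bool:
        if i == len(pat):                  # pattern used up: key must be too
            return j == len(key)
        if pat[i] == "#":                  # '#': consume zero words, or one more
            return match(i + 1, j) or (j < len(key) and match(i, j + 1))
        if j == len(key):                  # key used up but pattern is not
            return False
        if pat[i] in ("*", key[j]):        # '*' or an exact word match
            return match(i + 1, j + 1)
        return False

    return match(0, 0)


# Which of these events would an extractor bound to "*.file.#" receive?
for event in ["clowder.file.text.csv", "clowder.dataset.file.added"]:
    print(event, topic_matches("*.file.#", event))
# clowder.file.text.csv True
# clowder.dataset.file.added False
```

Note that a file-level binding such as `*.file.#` does not receive dataset events like `clowder.dataset.file.added`, because the second word of that key is `dataset`, not `file`.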

Typical extractor structure

...

- Go to the `/pyclowder2/sample-extractors/wordcount/` directory.
- Run the extractor; `python wordcount.py` is the basic invocation.
  - If you're running Docker, you'll need to specify the correct RabbitMQ URL, because the Docker host is not localhost: `python wordcount.py --rabbitmqURI amqp://guest:guest@<dockerIP>/%2f`
  - You can use `python wordcount.py -h` to see the other command-line options.
- When the extractor reports "Starting to listen for messages", you are ready.
- Upload a .txt file into Clowder:
  - Create > Datasets
  - Enter a name for the dataset and click Create
  - Select Files > Upload

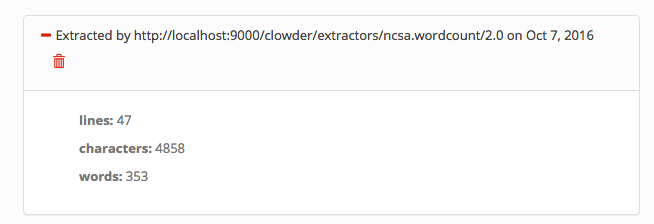

- Verify that the extractor triggers and that metadata is added to the file. If everything is working, you will also see activity in the console where you launched the extractor.
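To double-check the metadata shown in Clowder, you can compute comparable counts locally. This sketch assumes, as the sample's name suggests, that it reports line, word and character counts in the style of the Unix `wc` tool; `wc_counts` is a hypothetical helper, not part of pyClowder, and its key names may differ from the ones the sample uses.

```python
def wc_counts(path: str) -> dict:
    """Hypothetical helper: count lines, words and characters like `wc -l -w -m`."""
    # newline="" keeps '\r\n' intact so character counts match wc on CRLF files
    with open(path, encoding="utf-8", newline="") as f:
        text = f.read()
    return {
        "lines": text.count("\n"),   # wc counts newline characters
        "words": len(text.split()),  # runs of non-whitespace characters
        "characters": len(text),     # characters, not bytes (`wc -c` counts bytes)
    }
```

For example, `wc_counts("sample.txt")` on the file you uploaded should agree with the counts attached to it, provided the sample counts the same way.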

Writing an extractor

Once you can run the sample extractor, you are ready to develop your own extractor. Much of this section will be specific to Python extractors using pyClowder 2, but the concepts apply to all extractors.

Extractor vs. command line: calling your scripts

Often you will already have a script that performs the desired operations, for example one that takes directories of input and output files as command-line arguments. The goal is to call the relevant parts of that script from within the process_message() function of your extractor, and to push the outputs of those functions back into Clowder.
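One common way to do this is to separate the script's core logic from its argument parsing, so that the command line and the extractor call the same function. The sketch below assumes a hypothetical word-counting script; `summarize_file` and `run_on_paths` are illustrative names, and how process_message() obtains the downloaded file paths depends on your pyClowder version.

```python
import argparse
import json


def summarize_file(path: str) -> dict:
    """Core logic of a hypothetical existing script: count the words in one file."""
    with open(path, encoding="utf-8") as f:
        return {"words": len(f.read().split())}


def run_on_paths(paths: list) -> dict:
    """What process_message() would call: works on any list of paths, wherever they are."""
    return {path: summarize_file(path) for path in paths}


def main(argv=None) -> None:
    """The script's original command-line interface, now a thin wrapper."""
    parser = argparse.ArgumentParser(description="Count words in text files.")
    parser.add_argument("inputs", nargs="+", help="input text files")
    args = parser.parse_args(argv)
    print(json.dumps(run_on_paths(args.inputs), indent=2))


# In the standalone script, keep the usual entry point:
#     if __name__ == "__main__":
#         main()
```

Inside the extractor, process_message() would pass the downloaded file paths to `run_on_paths()` and upload the returned dictionary as metadata instead of printing it.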

Things to keep in mind:

- Extractors do not necessarily operate on a single directory of files. An extractor can run on any machine, anywhere in the world, as long as it can communicate with RabbitMQ. To support this, pyClowder downloads all necessary data into a temporary directory. This means the files may be in different locations each time the extractor is called: your extractor simply receives a list of paths to the files on disk, possibly in a /tmp location. If your script expects all files to be in one directory and you don't want to generalize it, check for that condition first and, if it does not hold, move the temporary files into a single directory.

- You are responsible for handling your script's outputs. If your extractor generates new files, you must upload them into Clowder before finishing. If it generates metadata, you must attach that metadata to the file or dataset of interest. pyClowder 2 does not handle outputs automatically, although we provide methods to make uploading easy.
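The two points above can be sketched as follows, assuming your script needs all of its inputs in one directory and writes its outputs there. `stage_inputs` and `new_outputs` are hypothetical helpers; the calls that upload files and metadata depend on your pyClowder version and are deliberately left out.

```python
import os
import shutil
import tempfile


def stage_inputs(local_paths: list) -> str:
    """Copy input files from wherever they were downloaded into one new directory."""
    workdir = tempfile.mkdtemp(prefix="extractor-")
    try:
        for src in local_paths:
            dst = os.path.join(workdir, os.path.basename(src))
            if os.path.exists(dst):  # two inputs with the same name would collide
                raise FileExistsError(f"duplicate input name: {os.path.basename(src)}")
            shutil.copy2(src, dst)
    except Exception:
        shutil.rmtree(workdir)  # don't leave a half-filled directory behind
        raise
    return workdir


def new_outputs(workdir: str, before: set) -> list:
    """Return the files that appeared in workdir after the script ran."""
    return sorted(
        os.path.join(workdir, name) for name in os.listdir(workdir) if name not in before
    )


# Sketch of the flow inside process_message(); the upload step is left to you:
#     workdir = stage_inputs(paths)
#     before = set(os.listdir(workdir))
#     ...run your script on workdir...
#     for output in new_outputs(workdir, before):
#         ...upload output to Clowder...
#     shutil.rmtree(workdir)  # remove the staging directory when finished
```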

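Common requirements

```bash
# Run the remaining commands as root (sudo -s opens a root shell)
sudo -s

# RabbitMQ connection URL; %2F is the URL-encoded default virtual host "/"
export RABBITMQ_URL="amqp://guest:guest@localhost:5672/%2F"
export EXTRACTORS_HOME="/home/clowder"

# Install git and pip, then the pika and requests Python libraries
apt-get -y install git python-pip
pip install pika requests

# Fetch pyClowder and give the clowder user (group users) ownership of it
cd "${EXTRACTORS_HOME}"
git clone https://opensource.ncsa.illinois.edu/stash/scm/cats/pyclowder.git
chown -R clowder:users pyclowder
```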
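The `%2F` at the end of `RABBITMQ_URL` is the URL-encoded name of RabbitMQ's default virtual host, `/`. The sketch below reads the variable exported above and shows how its parts decode. `describe_amqp_url` is a hypothetical helper that only parses the URL; it does not connect to the broker, and it assumes the URL includes a virtual-host path.

```python
import os
from urllib.parse import unquote, urlsplit


def describe_amqp_url(url: str) -> dict:
    """Split an AMQP URL into its parts, decoding the virtual host."""
    parts = urlsplit(url)
    return {
        "user": parts.username,
        "host": parts.hostname,
        "port": parts.port or 5672,        # 5672 is the default AMQP port
        "vhost": unquote(parts.path[1:]),  # drop the leading '/', then decode
    }


url = os.environ.get("RABBITMQ_URL", "amqp://guest:guest@localhost:5672/%2F")
print(describe_amqp_url(url))
```

The same decoding applies to the URI passed with `--rabbitmqURI` when running under Docker: only the host part changes, while `%2f` still names the `/` virtual host.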

...